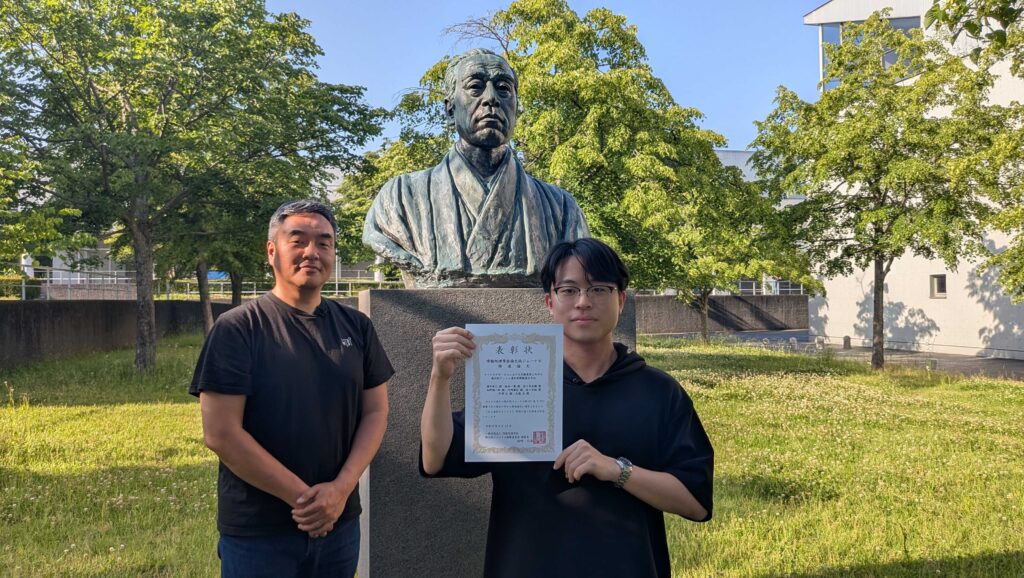

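The paper "Sensor-Augmented Voice Activity Projection for Enhancing Turn-Taking Prediction" by Hamanaka (濱中), a third-year doctoral student, has been accepted to the international conference SIGDIAL 2026. This work is a joint research project with NTT Communication Science Laboratories.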

Abstract:

Voice Activity Projection (VAP) has been actively studied to enable natural turn-taking in spoken dialogue systems, relying primarily on acoustic features. Visual cues such as head movements are also known to contribute to turn-taking prediction; however, camera-based approaches are affected by placement and lighting conditions and are not always reliably available to dialogue systems. As a camera-independent approach for directly capturing head motion, earable devices offer a promising solution.

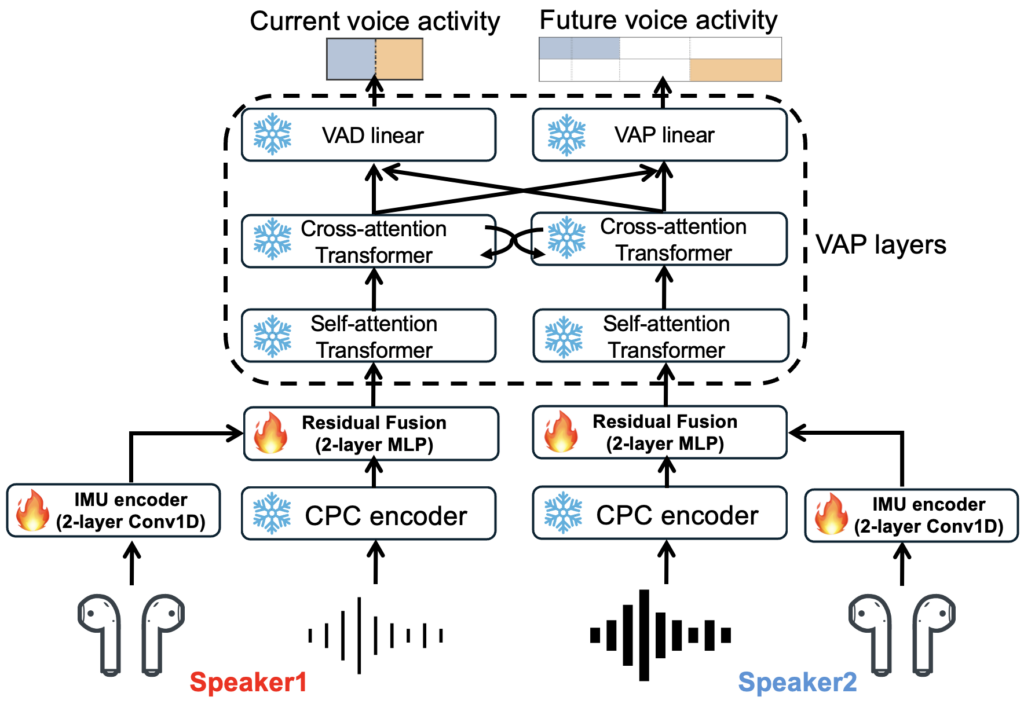

In this study, we propose Sensor-Augmented VAP, a framework that integrates in-ear inertial measurement unit (IMU) signals with a pre-trained VAP model via a lightweight residual fusion module. To validate the proposed method, we collected a dataset pairing conversational audio with in-ear IMU data, comprising 12 dyadic Japanese dialogues recorded using microphones and earbuds. Experiments in speaker-independent and speaker-dependent settings demonstrate that IMU fusion consistently improves the weighted F1 score for shift detection and reduces the VAP loss relative to the audio-only baseline. These results confirm that head-motion cues are effective for enhancing turn-taking prediction.
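The abstract does not specify the internals of the residual fusion module, so the following is only a minimal sketch of what residual fusion could look like in general: a small projection of the IMU features is added to the audio features from the pre-trained model. The class name `ResidualFusion`, the 6-axis IMU input, the layer sizes, and the zero-initialized output projection are all assumptions made for illustration, not details taken from the paper.

```python
import random


def matvec(weight, vec):
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in weight]


class ResidualFusion:
    """Hypothetical residual fusion: fused = audio + W_out @ relu(W_in @ imu)."""

    def __init__(self, imu_dim, hidden_dim, audio_dim, seed=0):
        rng = random.Random(seed)
        # Input projection with small random weights.
        self.w_in = [[rng.gauss(0.0, 0.1) for _ in range(imu_dim)]
                     for _ in range(hidden_dim)]
        # Assumption: the output projection starts at zero, so the module
        # initially passes the pre-trained audio features through unchanged.
        self.w_out = [[0.0] * hidden_dim for _ in range(audio_dim)]

    def hidden(self, imu_feat):
        # ReLU over the projected IMU features.
        return [max(0.0, h) for h in matvec(self.w_in, imu_feat)]

    def __call__(self, audio_feat, imu_feat):
        # Residual connection: audio features plus an IMU-derived correction.
        delta = matvec(self.w_out, self.hidden(imu_feat))
        return [a + d for a, d in zip(audio_feat, delta)]


# One frame of made-up features: 4-dim audio, 6-axis IMU (accel + gyro).
fusion = ResidualFusion(imu_dim=6, hidden_dim=8, audio_dim=4)
audio_feat = [0.5, -1.0, 0.25, 2.0]
imu_feat = [0.01, -0.02, 0.98, 0.1, 0.0, -0.1]
fused = fusion(audio_feat, imu_feat)
```

With the zero-initialized output projection, `fused` equals `audio_feat` before any training, which is one common way to attach a new modality to a pre-trained model without disturbing its initial behavior. Whether the paper uses this initialization is not stated in the abstract.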